Tuesday, August 24, 2010

Sensitivity, specificity and predictive power

There was quite a buzz last week about a study showing a 15-minute brain scan could diagnose autism with "an accuracy rate of 90%" (1,2,3,4 ...). Almost every news outlet that ran with the story seemed to think this new test would pave the way for a quick and efficient screening program for autism. But, as Carl Heneghan at the Guardian pointed out, the ability of a test to correctly diagnose someone with a condition is only one factor that contributes to how useful a test will be. The maths underlying the difference between the accuracy of a test and its predictive power is famously difficult to grasp, so I thought pretty pictures might do the trick.

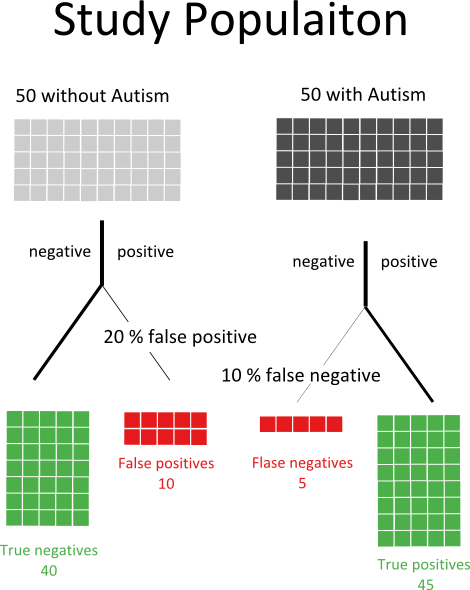

Half of the participants in the study that spawned all the fuss had autism; the rest did not. The 90% figure that made the headlines is the ability of the brain scan to corroborate the autism diagnosis among the participants already diagnosed with autism. In other words, the test has a 10% false negative rate. The ability of a test to correctly assign someone with a condition to that condition is called the sensitivity. All of the headlines, and most of the stories, failed to mention that the test is worse at picking non-autistic brains from scans. It has an 80% chance of correctly identifying someone as non-autistic (a 20% false positive rate); we call that measure the specificity. So, what do those two rates mean when we run the test on the study participants? If there were 50 autists and 50 non-autists you would end up with results like this: 45 true positives and 5 false negatives among the autists, and 40 true negatives and 10 false positives among the non-autists.

Now, imagine you were in this study population, you didn't know if you were autistic or not, and you got a positive result. How sure could you be that you actually had autism? You might think the answer was 90%; after all, the test is 90% accurate, right? In fact, you have to consider the possibility that you are one of the ten people we expect to get a false positive result. The probability of having autism if you have a positive test is actually the number of true positives divided by the total number of positives:

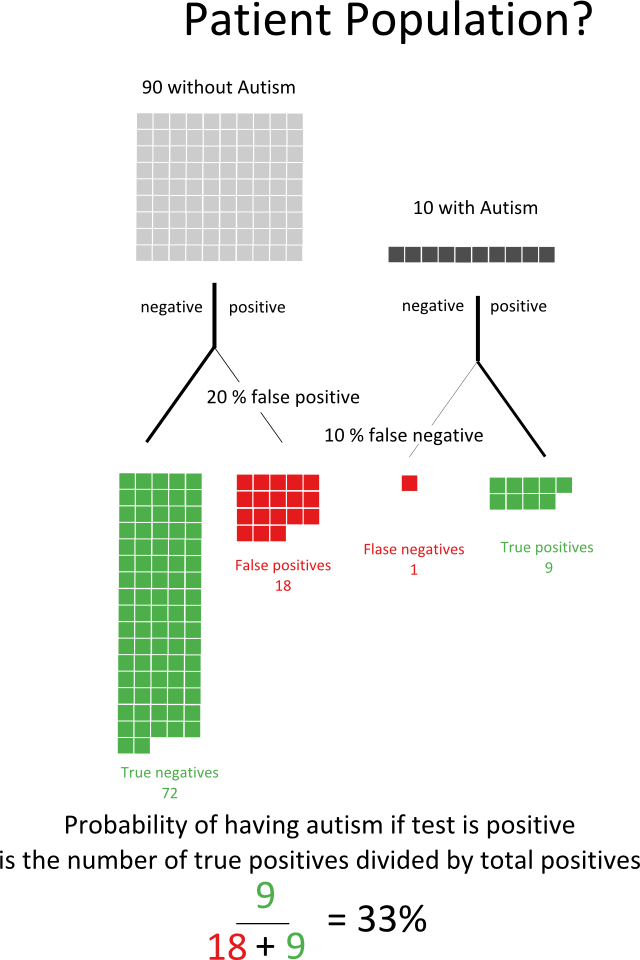
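Written out for the 50/50 study population, the calculation looks like this. The symbols TP (true positives) and FP (false positives) are my own shorthand, not something from the study; the counts follow from applying the 90% sensitivity to the 50 autists and the 20% false positive rate to the 50 non-autists:

$$
\text{predictive power} = \frac{TP}{TP + FP} = \frac{0.9 \times 50}{0.9 \times 50 + 0.2 \times 50} = \frac{45}{45 + 10} \approx 0.82
$$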

So, the test is pretty good, but once you include the chance that you have a false positive the predictive power of the test goes to about 82%. It gets much worse. In the example above we started with an autism rate of 50%; in the real world it's something closer to 1%. Let's presume that we aren't going to start a massive screening program for autism, and instead say that 10% of the people referred to doctors for perceived problems in social and educational development actually have autism. How would our test work on that patient population?

Out of every 100 such patients, 9 of the 10 with autism would test positive, but so would 18 of the 90 without it. Now if you got a positive result you could still say you probably don't have autism! The predictive power of the test has fallen to 33% (9 true positives out of 27 positives). It gets even worse when you think of actually screening the whole population - if 1% of people tested for autism actually had autism then the predictive power of this test would fall to about 4.3%.
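If you'd rather check the arithmetic than trust the pictures, here is a minimal Python sketch of the same calculation. The function name and layout are mine, purely for illustration; the only inputs are the 90% sensitivity and 80% specificity quoted above and the three prevalence figures used in this post.

```python
def positive_predictive_value(sensitivity, specificity, prevalence):
    """Probability of actually having the condition, given a positive test."""
    # Fraction of everyone tested who has the condition AND tests positive.
    true_positives = sensitivity * prevalence
    # Fraction of everyone tested who does NOT have it but tests positive anyway.
    false_positives = (1 - specificity) * (1 - prevalence)
    return true_positives / (true_positives + false_positives)


SENSITIVITY = 0.90  # from the study: 10% false negative rate
SPECIFICITY = 0.80  # from the study: 20% false positive rate

# Study population, referred patients, and whole-population screening.
for prevalence in (0.50, 0.10, 0.01):
    ppv = positive_predictive_value(SENSITIVITY, SPECIFICITY, prevalence)
    print(f"prevalence {prevalence:.0%}: predictive power {ppv:.1%}")
```

Nothing about the test itself changes between the three lines of output; only the proportion of people being tested who really have autism does.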

This problem isn't limited to the new autism scan - any test for a rare disease will need to have a very high sensitivity, and especially specificity, for it to be useful. You sometimes hear people complain that the lack of a prostate cancer screening program using the Prostate Specific Antigen (PSA) test is part of the medical profession's attempt to alienate men. In fact, the PSA test has a low specificity. If you were to screen all men for PSA you would get a lot of false positive results, which would lead to a lot of unnecessary biopsies. That's not just an expensive problem: any medical intervention carries a risk, so those biopsies would be placing large numbers of men at risk for no good reason. That's not to say the PSA test doesn't have its uses. If instead of screening all men you only test those who already have a higher risk of prostate cancer, you will decrease the number of false positives and the test's predictive power will increase.
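To see why specificity matters so much for a rare condition, here is a rough sketch comparing two imaginary improvements to a 90%/80% test at 1% prevalence. The 99% figures are made up for the comparison and don't describe any real test, PSA included; the function is just the true positives over all positives calculation used earlier in the post.

```python
def predictive_power(sensitivity, specificity, prevalence):
    """True positives as a share of all positive results."""
    true_positives = sensitivity * prevalence
    false_positives = (1 - specificity) * (1 - prevalence)
    return true_positives / (true_positives + false_positives)


PREVALENCE = 0.01  # a rare condition: 1% of those tested have it

baseline = predictive_power(0.90, 0.80, PREVALENCE)
# Hypothetical: sensitivity raised to 99%, specificity left at 80%.
better_sensitivity = predictive_power(0.99, 0.80, PREVALENCE)
# Hypothetical: specificity raised to 99%, sensitivity left at 90%.
better_specificity = predictive_power(0.90, 0.99, PREVALENCE)

print(f"baseline:           {baseline:.1%}")
print(f"better sensitivity: {better_sensitivity:.1%}")
print(f"better specificity: {better_specificity:.1%}")
```

When almost everyone tested is healthy, the false positives come from that huge healthy group, so trimming the false positive rate moves the result far more than catching a few extra true cases.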

Labels: pretty data, sci-blogs, science, science communication, statistics